CrashLoopBackOff is a common Kubernetes error status. It indicates that a container in a pod keeps crashing and being restarted in an endless loop.

Here at Ibmi Media, we will look at ways to resolve the CrashLoopBackOff error in Kubernetes.

What Triggers the CrashLoopBackOff Error in Kubernetes?

You can identify this error by running the kubectl get pods command – the pod status will show the error like this:

NAME READY STATUS RESTARTS AGE

ibmimedia-pod-1 0/1 CrashLoopBackOff 2 1m20s

The main causes of the CrashLoopBackOff error include:

- Insufficient resources — lack of resources prevents the container from loading.

- Locked file — a file was already locked by another container.

- Locked database — the database is being used and locked by other pods.

- Failed reference — reference to scripts or binaries that are not present on the container.

- Setup error — an issue with the init-container setup in Kubernetes.

- Config loading error — a server cannot load the configuration file.

- Misconfigurations — a general file system misconfiguration.

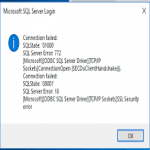

- Connection issues — DNS (kube-dns) resolution fails, so the container cannot connect to a third-party service.

- Deploying failed services — an attempt to deploy services/applications that have already failed (e.g. due to a lack of access to other services).
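Several of these causes leave a visible trace in the pod status. The sketch below uses a hypothetical helper named last_termination, assuming you pass a pod name and optionally a namespace. It reads each container's last termination reason and exit code through kubectl's JSONPath output. A reason of OOMKilled points to insufficient memory, while Error with a non-zero exit code usually points to the application or its configuration.

```shell
# Hypothetical helper: print each container's name, last termination
# reason and exit code for one pod (tab-separated, one container per line).
# Usage: last_termination POD [NAMESPACE]
last_termination() {
  local pod="$1" ns="${2:-default}"
  kubectl get pod "$pod" --namespace "$ns" -o jsonpath='{range .status.containerStatuses[*]}{.name}{"\t"}{.lastState.terminated.reason}{"\t"}{.lastState.terminated.exitCode}{"\n"}{end}'
}
```

For the pod shown earlier, this would be run as: last_termination ibmimedia-pod-1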

Diagnosis and Resolution of CrashLoopBackOff Error

The best way to identify the root cause of the error is to go through the list of potential causes one by one, beginning with the most common ones.

1. Search for "Back Off Restarting Failed Container"

- Firstly, run kubectl describe pod [name].

- If the Events section of the output shows Liveness probe failed and Back-off restarting failed container messages, the container is failing its liveness probe and the kubelet keeps restarting it.

- When both messages appear together, the application may simply be too slow to answer the probe, for example because of a temporary resource overload caused by a spike in activity.

- To give the application a larger window of time to respond, increase the liveness probe's periodSeconds or timeoutSeconds.
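The checks above can be scripted. In this sketch, probe_events and relax_liveness_probe are hypothetical helper names. The patch assumes a Deployment whose first container already defines a livenessProbe, and the timeout and period values are whatever you choose; they are not recommendations.

```shell
# Show only the event lines that match the two messages discussed above.
# Usage: probe_events POD [NAMESPACE]
probe_events() {
  local pod="$1" ns="${2:-default}"
  kubectl describe pod "$pod" --namespace "$ns" \
    | grep -E 'Liveness probe failed|Back-off restarting failed container'
}

# Hypothetical helper: set timeoutSeconds and periodSeconds on the liveness
# probe of the first container in a Deployment, using a JSON patch.
# Usage: relax_liveness_probe DEPLOYMENT TIMEOUT PERIOD [NAMESPACE]
relax_liveness_probe() {
  local deploy="$1" timeout="$2" period="$3" ns="${4:-default}"
  local base="/spec/template/spec/containers/0/livenessProbe"
  kubectl patch deployment "$deploy" --namespace "$ns" --type=json -p \
    "[{\"op\":\"add\",\"path\":\"$base/timeoutSeconds\",\"value\":$timeout},{\"op\":\"add\",\"path\":\"$base/periodSeconds\",\"value\":$period}]"
}
```

The JSON patch "add" operation replaces a field that already exists, so it works whether or not the probe already sets these values. Changing the pod template triggers a rollout, so the new probe settings apply to freshly created pods.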

If this was not the problem, move on to the next step.

2. Search for the logs from the previous container instance

If the pod details didn't reveal anything, we should look at the information from the previous container instance. To get the last ten log lines from the pod, run the following command:

$ kubectl logs [pod-name] --previous --tail 10

Then, look through the log for clues as to why the pod keeps crashing. If we can't solve the problem, we'll move on to the next step.
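When a pod runs more than one container, kubectl needs to know which container's logs to show. A minimal sketch, using a hypothetical helper named previous_logs and the current namespace:

```shell
# Print the last N lines logged by the previous (crashed) instance of a
# container. CONTAINER is only needed when the pod runs more than one.
# Usage: previous_logs POD [CONTAINER] [LINES]
previous_logs() {
  local pod="$1" container="$2" lines="${3:-10}"
  if [ -n "$container" ]; then
    kubectl logs "$pod" -c "$container" --previous --tail "$lines"
  else
    kubectl logs "$pod" --previous --tail "$lines"
  fi
}
```

For example, previous_logs ibmimedia-pod-1 "" 50 shows the last 50 lines of the single container in the pod shown earlier.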

3. Check the Deployment Logs

Firstly, to get the kubectl deployment logs, run the following command:

$ kubectl logs -f deploy/[deployment-name] -n [namespace]

This could also reveal problems at the application level.
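With deploy/NAME, kubectl picks a single pod from the Deployment, so a crash in another replica can be missed. The sketch below, with hypothetical helper names and the default namespace assumed when none is given, wraps the command above and adds a label-based variant that reads every matching pod at once. The label selector, such as app=web, is an assumption; use whatever labels your Deployment's selector actually defines.

```shell
# Follow the logs of one pod selected from a Deployment.
# Usage: deploy_logs DEPLOYMENT [NAMESPACE]
deploy_logs() {
  kubectl logs -f "deploy/$1" -n "${2:-default}"
}

# Show recent logs from all containers of every pod matching a label
# selector, each line prefixed with the pod and container it came from.
# Usage: deploy_logs_all LABEL_SELECTOR [NAMESPACE]
deploy_logs_all() {
  kubectl logs -l "$1" -n "${2:-default}" --all-containers --prefix --tail 20
}
```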

Finally, if all of the above fails, we’ll perform advanced debugging on the container that’s crashing.

Further Debugging: CrashLoop Container Bashing

To gain direct access to the CrashLoop container and identify and resolve the issue that caused it to crash, follow the steps below:

1. Determine the entrypoint and cmd

To debug the container, we'll need to figure out what the entrypoint and cmd are.

Perform the following actions:

- Firstly, to pull the image, type docker pull [image-id].

- Then, run docker inspect [image-id] to find the container image's entrypoint and cmd.
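Both steps can be combined, and docker inspect can print just the two fields we need instead of the full JSON document. A minimal sketch, with show_entrypoint as a hypothetical helper name:

```shell
# Pull an image, then print only its ENTRYPOINT and CMD as JSON arrays.
# Nothing is printed if the pull fails.
# Usage: show_entrypoint IMAGE
show_entrypoint() {
  docker pull "$1" >/dev/null &&
    docker inspect --format 'Entrypoint: {{json .Config.Entrypoint}}  Cmd: {{json .Config.Cmd}}' "$1"
}
```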

2. Change the entrypoint

Because the container crashes as soon as it starts, we'll need to temporarily change the entrypoint in the container specification to tail -f /dev/null. This keeps the container running so that we can get inside it.
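In a pod specification, the command field overrides the image's entrypoint. The sketch below, using a hypothetical helper named hold_open, patches the first container of a Deployment. It wraps tail in sh -c so that any args already set in the spec are passed to sh as positional parameters and ignored, rather than appended to tail. This assumes the image contains /bin/sh and tail.

```shell
# Hypothetical helper: keep the first container of a Deployment alive by
# replacing its command with "sh -c 'tail -f /dev/null'".
# Usage: hold_open DEPLOYMENT [NAMESPACE]
hold_open() {
  kubectl patch deployment "$1" -n "${2:-default}" --type=json -p \
    '[{"op":"add","path":"/spec/template/spec/containers/0/command","value":["sh","-c","tail -f /dev/null"]}]'
}
```

When debugging is finished, kubectl rollout undo deployment/[name] returns the Deployment to its previous revision. If the container also has a liveness probe, that probe will probably keep failing while the application is not running, so it may need to be removed temporarily as well.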

3. Set up debugging software

With the container running, we can use kubectl exec to open a shell inside it (for example, kubectl exec -it [pod-name] -- /bin/sh). Make sure the debugging tools we need (e.g., curl or vim) are installed, or add them. On Debian- or Ubuntu-based images, we can install them as root (sudo is usually not available inside a container) with:

$ apt-get update && apt-get install -y [name of debugging tool]

4. Verify that no packages or dependencies are missing

Check for any missing packages or dependencies that are preventing the app from starting. If any are missing, provide them to the application and see if that resolves the error. If nothing is missing, or the error persists, proceed to the next step.
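Two quick checks can be run from the shell inside the container: whether the commands the application needs are on the PATH, and whether a binary's shared libraries can be found. This is a sketch. check_commands and missing_libs are hypothetical helper names, and ldd is only present in images that ship it, typically glibc-based ones.

```shell
# Report which of the given commands cannot be found on PATH.
# Returns 0 if every command was found, 1 otherwise.
# Usage: check_commands CMD...
check_commands() {
  local cmd missing=0
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      echo "missing: $cmd"
      missing=1
    fi
  done
  return "$missing"
}

# For a dynamically linked binary, ldd lists its shared libraries; any line
# marked "not found" is a library missing from the image.
# Usage: missing_libs /path/to/binary
missing_libs() {
  ldd "$1" 2>/dev/null | grep 'not found'
}
```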

5. Verify the application's settings

Examine the environment variables to ensure they are correct. Then check that the configuration files the application expects are present, because a missing configuration file will also cause the application to fail. We can use curl to download any missing files.

If configuration changes are required, such as the database username and password in a configuration file, we can make them with vim. Keep in mind that changes made inside a running container are lost when it restarts, so a working fix must also be applied to the image or the pod specification. If the problem was not caused by missing files or configuration, we'll need to look into some of the less common causes.
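Checking environment variables one by one is easy to get wrong, so a small loop helps. This is a minimal sketch run inside the container; check_env is a hypothetical helper name, and the variable names in the usage line are illustrative examples, not names the application necessarily uses.

```shell
# Report required environment variables that are unset or empty.
# Returns 0 if all are set, 1 otherwise.
# Usage (hypothetical names): check_env DB_HOST DB_USER DB_PASSWORD
check_env() {
  local name missing=0
  for name in "$@"; do
    if [ -z "$(printenv "$name")" ]; then
      echo "unset or empty: $name"
      missing=1
    fi
  done
  return "$missing"
}
```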

How to Avoid the CrashLoopBackOff Error?

1. Configure and double-check the files

The CrashLoopBackOff error can be caused by a misconfigured or missing configuration file, preventing the container from starting properly. Before deploying, ensure that all files are present and properly configured.

On a node that uses the Docker runtime, container files are typically stored under /var/lib/docker; we can also check the files from inside the container. To see if the target file exists, we can use commands like ls and find. We can also inspect files with cat and less to ensure that there are no misconfiguration issues.
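The existence check can be extended to catch files that are present but unreadable or empty, both of which also stop an application from loading its configuration. A sketch with a hypothetical helper named check_files; the paths are whatever your application reads and are not fixed by Kubernetes.

```shell
# Check that each expected configuration file exists, is a regular file,
# is readable and is not empty. Returns 0 if all pass, 1 otherwise.
# Usage (hypothetical paths): check_files /etc/myapp/config.yaml /etc/myapp/db.conf
check_files() {
  local f bad=0
  for f in "$@"; do
    if [ ! -f "$f" ]; then
      echo "missing: $f"; bad=1
    elif [ ! -r "$f" ]; then
      echo "not readable: $f"; bad=1
    elif [ ! -s "$f" ]; then
      echo "empty: $f"; bad=1
    fi
  done
  return "$bad"
}
```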

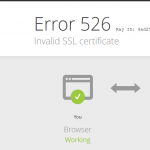

2. Be Wary of Third-Party Services

If an application uses a third-party service and calls to that service fail, the problem may lie with the service itself or with the connection to it. Most such errors are caused by SSL certificate problems or network issues, so we need to ensure that both are working. To test, we can log into the container and use curl to reach the endpoints manually.
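curl's exit code already distinguishes the failure types mentioned above, so it is worth printing. A minimal sketch, with check_endpoint as a hypothetical helper name and the URL in the usage line as a placeholder:

```shell
# Probe an endpoint from inside the container. curl exits non-zero on DNS,
# connection, timeout or TLS certificate errors, and --fail makes it also
# fail on HTTP status codes of 400 and above.
# Usage: check_endpoint https://api.example.com/health
check_endpoint() {
  if curl --silent --show-error --fail --max-time 5 -o /dev/null "$1"; then
    echo "OK: $1"
  else
    echo "FAILED (curl exit code $?): $1"
    return 1
  fi
}
```

A few common curl exit codes: 6 means the host name could not be resolved (a DNS problem), 7 means the connection was refused, 28 is a timeout, 60 indicates an SSL certificate problem, and 22 is an HTTP error reported because of --fail.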

3. Examine the Environment Variables

The CrashLoopBackOff error is frequently caused by incorrect environment variables. Containers that run Java applications, for example, often have their environment variables set incorrectly. So, check the environment variables with env to ensure they are correct.
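Comparing what the Deployment declares with what the running process actually receives can reveal a variable that is set in the wrong place. This sketch uses the hypothetical helper names declared_env and runtime_env. Variables that come from Secrets or ConfigMaps may not be listed with their values by --list, and runtime_env only works while the container is running, for example after the entrypoint override described earlier.

```shell
# List the environment variables declared in a Deployment's pod template,
# without exec'ing into a container.
# Usage: declared_env DEPLOYMENT [NAMESPACE]
declared_env() {
  kubectl set env "deployment/$1" -n "${2:-default}" --list
}

# Print the environment that a running container actually sees.
# Usage: runtime_env POD [NAMESPACE]
runtime_env() {
  kubectl exec "$1" -n "${2:-default}" -- env
}
```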

4. Examine Kube-DNS

The application may be trying to connect to an external service while the kube-dns service (served by CoreDNS in most current clusters) is not working, so name resolution fails. In that case, restarting the kube-dns pods is often enough for the container to connect to the external service again.
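Before restarting anything, it helps to confirm that the DNS pods are actually unhealthy. In the sketch below, dns_pods and restart_dns are hypothetical helper names. CoreDNS keeps the k8s-app=kube-dns label for compatibility. The Deployment name coredns is what kubeadm creates, but other distributions may use a different name, so it can be passed as an argument.

```shell
# Show the cluster DNS pods and their status.
dns_pods() {
  kubectl get pods -n kube-system -l k8s-app=kube-dns
}

# Restart the DNS pods by triggering a rolling restart of their Deployment.
# Usage: restart_dns [DEPLOYMENT]   (defaults to coredns)
restart_dns() {
  kubectl rollout restart "deployment/${1:-coredns}" -n kube-system
}
```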

5. Check for File Locks

As previously stated, file and database locks are a common cause of the CrashLoopBackOff error, and port conflicts cause similar failures. Inspect all ports and containers to ensure that none of them is being used by the wrong service. If one is, stop the service that is occupying the required port.
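Containers in the same pod share one network namespace, so two of them cannot listen on the same port. The sketch below, a hypothetical helper named duplicate_ports, lists the containerPort values declared for each container and prints any port that is declared more than once. Ports a process opens without declaring them in the spec will not appear.

```shell
# Print any containerPort declared by more than one container in a pod.
# Prints nothing if there are no duplicates.
# Usage: duplicate_ports POD [NAMESPACE]
duplicate_ports() {
  kubectl get pod "$1" -n "${2:-default}" \
    -o jsonpath='{range .spec.containers[*]}{range .ports[*]}{.containerPort}{"\n"}{end}{end}' \
    | sort -n | uniq -d
}
```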


Conclusion

This article covers ways to tackle and avoid the CrashLoopBackOff error in Kubernetes. CrashLoopBackOff is a status message indicating that one of your pods is stuck in a cycle: one or more of its containers keep failing and being restarted. The restarts happen because each pod gets a default restartPolicy of Always when it is created, so the kubelet keeps restarting the failed container, waiting longer between attempts each time.
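The "BackOff" part of the name refers to that growing wait. The Kubernetes documentation describes the default delay as starting at 10 seconds, doubling after each restart and being capped at five minutes, with the timer reset once a container has run for 10 minutes without problems. The arithmetic can be sketched as follows; backoff_schedule is a hypothetical helper that only computes the numbers and does not query a cluster.

```shell
# Print the first N restart delays, in seconds, for the default kubelet
# back-off: start at 10, double each time, cap at 300.
# Usage: backoff_schedule N
backoff_schedule() {
  local n="$1" delay=10 i=0 out=""
  while [ "$i" -lt "$n" ]; do
    out="$out$delay "
    delay=$((delay * 2))
    if [ "$delay" -gt 300 ]; then delay=300; fi
    i=$((i + 1))
  done
  echo "${out% }"
}
```

For example, backoff_schedule 7 prints 10 20 40 80 160 300 300, which is why a pod that has been crash-looping for a while may sit in CrashLoopBackOff for several minutes between restarts.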

Examples of why a pod would fall into a CrashLoopBackOff state include:

- Errors when deploying Kubernetes.

- Missing dependencies.

- Changes caused by recent updates.

A typical process for going from discovering a CrashLoopBackOff message to fixing it looks like this:

- The discovery process: This includes learning that one or more pods are in the restart loop and noticing that the applications they run are either offline or performing below optimal levels.

- Information gathering: Immediately after the first step, most engineers run the kubectl get pods command to find out which pods are affected. Its output is a list of all pods along with their current status.

- Drill down on specific pod(s): Once you know which pods are in the CrashLoopBackOff state, your next task is to target each of them to get more details about their setup. For this, run the kubectl describe pod [name] command, replacing [name] with the name of your target pod.

- Once you've reached this point, several fields in the output should stand out, such as the container's State, Last State, Reason, Exit Code and the Events list. Focusing on these makes it much easier to work out why the pod keeps failing.
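The information-gathering and drill-down steps can be combined into one loop. This is a sketch with hypothetical helper names. It relies on the fact that with --all-namespaces the columns of kubectl get pods are NAMESPACE, NAME, READY, STATUS, RESTARTS and AGE, so STATUS is the fourth field. The pattern match also catches Init:CrashLoopBackOff, the status shown when an init container is the one crashing.

```shell
# List every crash-looping pod across all namespaces as "NAMESPACE NAME".
crashlooping_pods() {
  kubectl get pods --all-namespaces --no-headers \
    | awk '$4 ~ /CrashLoopBackOff/ { print $1, $2 }'
}

# Run kubectl describe on each crash-looping pod in turn.
describe_crashlooping() {
  crashlooping_pods | while read -r ns name; do
    echo "=== $ns/$name ==="
    # Redirect stdin so kubectl cannot consume the list being read.
    kubectl describe pod "$name" -n "$ns" </dev/null
  done
}
```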
