Linux users sometimes hit the "Too many open files" error when a server is under high load. Once a process reaches its limit, it cannot open any more files or sockets.

Here at Ibmi Media, as part of our Server Management Services, our Server Administration Experts regularly help our customers solve Linux-related issues.

In this article, we will look at how to find the maximum number of open files allowed by Linux and how to change it for an entire host, an individual service, or the current session.

Nature of the Linux error "Too Many Open Files"

By default, Linux limits the number of files a process can open. This restricts the amount of resources a single process can use.

The "Too Many Open Files" error most often affects servers running an NGINX/httpd web server or a database server (MySQL/MariaDB/PostgreSQL).

For instance, when an Nginx web server exceeds its open file limit, you will see an error message such as this:

socket() failed (24: Too many open files) while connecting to upstream

To find the maximum number of file descriptors the system can open, run the following command:

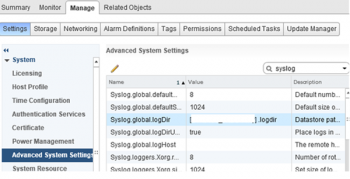

cat /proc/sys/fs/file-max

By default, the open file limit for the current user is 1024. To verify this, run the command:

ulimit -n

[root@server /]# cat /proc/sys/fs/file-max

97816

[root@server /]# ulimit -n

1024

Linux has two types of limits, hard and soft. Any user can change a soft limit value, but only a privileged (root) user can raise a hard limit value.

However, note that the soft limit value cannot exceed the hard limit value.
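The relationship between the two limits can be sketched in a few lines of shell. This is only an illustration: it assumes a bash-like shell whose ulimit builtin accepts -S (soft) and -H (hard), and a hard limit of at least 256. The function name demo_soft_hard_limits is made up for this example, and its body runs in a subshell so the current session keeps its limits.

```shell
# demo_soft_hard_limits: show that the soft limit can move freely below the
# hard limit but cannot exceed it. The ( ... ) body is a subshell, so none of
# the changes leak into the calling session.
demo_soft_hard_limits() (
    hard=$(ulimit -Hn)
    echo "soft=$(ulimit -Sn) hard=$hard"

    # Any user may lower the soft limit (assumes the hard limit is >= 256).
    ulimit -Sn 256
    echo "lowered soft limit: $(ulimit -Sn)"

    # Raising it again is fine as long as it stays within the hard limit.
    ulimit -Sn "$hard"
    echo "restored soft limit: $(ulimit -Sn)"

    # One step past the hard limit is rejected.
    if [ "$hard" != "unlimited" ] && ! ulimit -Sn "$((hard + 1))" 2>/dev/null; then
        echo "cannot raise soft limit above $hard"
    fi
)

demo_soft_hard_limits
```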

To see the soft limit value, run the command:

ulimit -Sn

To display the hard limit value, run the following command:

ulimit -Hn

More about the "Too Many Open Files" error & Open File Limits in Linux

The error means that a process has opened too many files (file descriptors) and cannot open new ones. In Linux, the maximum open file limits are set by default for each process or user, and the default values are rather small.
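To see how many descriptors a process is actually holding, one option is to count the entries under /proc/PID/fd. The sketch below assumes a Linux system with /proc mounted and a process you are allowed to inspect; count_open_fds is a hypothetical helper name, not a standard tool.

```shell
# count_open_fds PID: print the number of file descriptors PID has open.
# Defaults to the current shell when no PID is given.
count_open_fds() {
    local pid="${1:-$$}"
    # Each entry in /proc/PID/fd is one open descriptor.
    ls "/proc/$pid/fd" | wc -l
}

echo "this shell holds $(count_open_fds "$$") open descriptors"
```

Comparing this number with the output of ulimit -n shows how close a process is to its limit.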

We at Ibmi Media have monitored this closely and have come up with a few solutions:

1. Increase the Max Open File Limit in Linux

We can allow a larger number of open files by raising the limits in the Linux OS. To make the new settings permanent, so that they are not reset after a server or session restart, edit /etc/security/limits.conf.

Add the following lines (the leading * makes the entry the default for all users other than root):

* hard nofile 97816

* soft nofile 97816

On Ubuntu Linux, also add the following line to the PAM session configuration (for example, /etc/pam.d/common-session):

session required pam_limits.so

These settings apply the open file limits after user authentication.

After making the changes, open a new terminal session and check the open file limit:

ulimit -n

97816
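If the new value does not show up, it can help to check which nofile entries are actually configured. The sketch below filters limits.conf-style files with awk; list_nofile_entries is a hypothetical helper, and it assumes the usual four-column layout (domain, type, item, value).

```shell
# list_nofile_entries FILE...: print domain, type and value of every
# non-comment line whose item column is "nofile".
list_nofile_entries() {
    awk '$1 !~ /^#/ && $3 == "nofile" { print $1, $2, $4 }' "$@"
}

# Only read the system file if it exists and is readable on this machine.
if [ -r /etc/security/limits.conf ]; then
    list_nofile_entries /etc/security/limits.conf
fi
```

Files under /etc/security/limits.d/ are read as well on most distributions, so they can be passed to the same function.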

2. Increase the Open File Descriptor Limit per service

The limit on open file descriptors can also be changed for a specific service rather than for the entire operating system.

For instance, to change the limits for Apache, open the service settings using systemctl:

systemctl edit httpd.service

Once the service settings are open, add the required limits. For example:

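[Service]

LimitNOFILE=16000

A single value sets both the soft and the hard limit; to set them separately, use the form LimitNOFILE=soft:hard. After making the changes, reload the service configuration and restart the service: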

systemctl daemon-reload

systemctl restart httpd.service

To ensure the values have changed, get the service PID:

systemctl status httpd.service

For example, if the service PID is 4262:

grep "Max open files" /proc/4262/limits

Thus, we can change the maximum number of open files for a specific service.
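The check above can be wrapped in a small helper that prints only the soft and hard values. This is a sketch, assuming the standard /proc/PID/limits layout on Linux; nofile_limits is a made-up name, and the commented systemctl call assumes a systemd version that supports the --value option.

```shell
# nofile_limits PID: print the soft and hard "Max open files" values of PID.
# Defaults to the current shell when no PID is given.
nofile_limits() {
    # The line looks like: "Max open files  1024  4096  files"
    awk '/^Max open files/ { print "soft=" $4, "hard=" $5 }' "/proc/${1:-$$}/limits"
}

# Inspect the current shell.
nofile_limits "$$"

# For a service, the main PID can be looked up first (not run here):
# nofile_limits "$(systemctl show --property MainPID --value httpd.service)"
```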

3. Set Max Open Files Limit for Nginx & Apache

In addition to raising the open file limit for the web server's service, we should also change the web server's own configuration.

For example, set or change the following directive in the Nginx configuration file /etc/nginx/nginx.conf:

worker_rlimit_nofile 16000;

When configuring Nginx on a highly loaded 8-core server with worker_connections 8192, we need to specify

8192*2*8 (vCPU) = 131072 in worker_rlimit_nofile

Then restart Nginx.
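The sizing rule used above (worker_connections × 2 × number of cores) is easy to script. The function name nginx_rlimit is only illustrative, and the core count is passed in by hand here; on a live server it could come from nproc instead.

```shell
# nginx_rlimit CONNECTIONS CORES: value for worker_rlimit_nofile using the
# rule from the article: worker_connections * 2 * number of cores.
nginx_rlimit() {
    echo $(( $1 * 2 * $2 ))
}

# The 8-core example with worker_connections 8192.
nginx_rlimit 8192 8   # prints 131072
```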

For Apache, create a directory:

mkdir /lib/systemd/system/httpd.service.d/

Then create the limit_nofile.conf file:

nano /lib/systemd/system/httpd.service.d/limit_nofile.conf

Add to it:

[Service]

LimitNOFILE=16000

Then run systemctl daemon-reload and do not forget to restart httpd.

4. Alter the Open File Limit for Current Session

To change the limit for the current session only, run the command:

ulimit -n 3000

Once the terminal is closed and a new session is created, the limits revert to the original values specified in /etc/security/limits.conf.

To change the system-wide value in /proc/sys/fs/file-max, set fs.file-max in /etc/sysctl.conf:

fs.file-max = 200000

Finally, apply the change:

sysctl -p

[root@server /]# sysctl -p

net.ipv4.ip_forward = 1

fs.file-max = 200000

[root@server /]# cat /proc/sys/fs/file-max

200000

If none of the above tips solve the issue, consult our experienced tech team, who work round the clock to help you fix Linux issues.
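To judge whether the system-wide fs.file-max ceiling discussed above is the bottleneck at all, the kernel exposes current usage in /proc/sys/fs/file-nr. The sketch below assumes a Linux system; file_table_usage is a hypothetical helper name.

```shell
# file_table_usage: print how many file handles the kernel has allocated
# and the system-wide maximum (the same value as fs.file-max).
file_table_usage() {
    local allocated unused max
    # file-nr holds three numbers: allocated handles, unused handles, maximum.
    read -r allocated unused max < /proc/sys/fs/file-nr
    echo "allocated=$allocated max=$max"
}

file_table_usage
```

If the allocated count is far below the maximum, the per-process or per-service limits are the more likely cause of the error.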

Conclusion

This article covered how to fix the Linux error "Too Many Open Files" and how to change the default limits set by Linux.
