WordPress error "Failed to load resource" - Fix it now

This article covers how to resolve the "Failed to load resource" error in WordPress, which can be caused by a missing file or by issues in the WordPress URL settings.

To fix this WordPress error:

1. Replace the missing resource

If the missing resource is an image in one of your blog posts or pages, look for it in the media library.

If you can find it in the media library, add it again by editing the post or page.
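To find out which resource actually failed, it can help to list every image, script, and stylesheet a page references and check which ones return an error. The sketch below uses only Python's standard library; the function names are hypothetical, and it only looks at `img`, `script`, and stylesheet `link` tags (it ignores `srcset` and lazy-loading attributes such as `data-src`).

```python
import urllib.error
import urllib.request
from html.parser import HTMLParser
from urllib.parse import urljoin


class ResourceCollector(HTMLParser):
    """Collects absolute URLs of images, scripts and stylesheets on a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.urls = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in ("img", "script") and attrs.get("src"):
            self.urls.append(urljoin(self.base_url, attrs["src"]))
        elif tag == "link" and "stylesheet" in (attrs.get("rel") or "").split():
            if attrs.get("href"):
                self.urls.append(urljoin(self.base_url, attrs["href"]))


def resource_urls(html, base_url):
    """Return every resource URL referenced by the HTML, in document order."""
    collector = ResourceCollector(base_url)
    collector.feed(html)
    return collector.urls


def http_status(url, timeout=10):
    """Send a HEAD request; return the status code, or None if unreachable."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:  # 4xx/5xx responses raise HTTPError
        return err.code
    except urllib.error.URLError:  # DNS failure, refused connection, etc.
        return None


def find_missing(urls, fetch_status=http_status):
    """URLs that did not load: unreachable (None) or answered with 4xx/5xx."""
    missing = []
    for url in urls:
        status = fetch_status(url)
        if status is None or status >= 400:
            missing.append(url)
    return missing
```

For example, `find_missing(resource_urls(html, page_url))` returns the resources that would show up as "Failed to load resource" in the browser console. Some servers reject HEAD requests, so treat a 405 from this check as inconclusive.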

2. Replace theme or plugin files

If the failed resource is a WordPress plugin or theme file, the easiest way to replace it is to reinstall the plugin or theme.

To reinstall a theme, first deactivate your current WordPress theme.

To do this, visit the Appearance » Themes page and activate a different theme; WordPress deactivates the current one automatically.
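Before reinstalling, you can confirm which theme or plugin files are actually missing by comparing the installed folder with a freshly downloaded copy of the same version. This is a minimal sketch with hypothetical function names; it assumes you have the fresh copy unpacked locally and can read the installed folder (for example, after downloading it over FTP).

```python
from pathlib import Path


def relative_files(root):
    """Set of file paths under root, relative to root, using forward slashes."""
    root = Path(root)
    return {p.relative_to(root).as_posix() for p in root.rglob("*") if p.is_file()}


def missing_files(installed_dir, fresh_dir):
    """Files that exist in the fresh copy but not in the installed theme or plugin."""
    return sorted(relative_files(fresh_dir) - relative_files(installed_dir))


if __name__ == "__main__":
    import sys

    # Hypothetical usage: python missing_files.py <installed_dir> <fresh_dir>
    if len(sys.argv) == 3:
        for path in missing_files(sys.argv[1], sys.argv[2]):
            print(path)
```

If the list is non-empty, reinstalling the theme or plugin as described above restores those files.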

Googlebot cannot access CSS and JS files – Resolve crawl errors

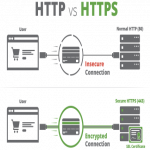

This guide covers the "Googlebot cannot access CSS and JS files" crawl error. Googlebot and other search engine crawlers check your website's robots.txt file before crawling the rest of the site. On Apache servers, their requests are also subject to the rules in your .htaccess file.

The .htaccess file holds server rules to block IP addresses, redirect URLs, enable gzip compression, and so on. The robots.txt file, in turn, holds a set of rules for search engines.

The rules in robots.txt are the reason you received the "Googlebot cannot access CSS and JS files" warning.

A robots.txt file has a few lines that either block or allow crawling of files and directories. Google warns that blocking the crawling of JS and CSS files hurts how well it can render and index your content and can lead to lower rankings.

JavaScript and Cascading Style Sheets (CSS) are responsible for rendering your website: CSS controls its layout and appearance, while JavaScript handles forms, fires events, and so on.

If JS is blocked, Googlebot cannot fetch and render that code, so it cannot see the page the way visitors do and may treat the content with suspicion, for example as possible spam or a violation of link schemes.

The same logic applies to CSS files.
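To see why typical WordPress rules trigger this warning, you can evaluate them the way Google documents: the most specific (longest) matching path wins, and Allow wins a tie. The sketch below is a simplified evaluator for illustration only (the function names are hypothetical); it ignores the `*` and `$` wildcards and matches user agents exactly. It deliberately does not use Python's `urllib.robotparser`, because that module applies the first matching rule in file order rather than the longest one.

```python
def parse_rules(robots_txt, agent="*"):
    """Collect (is_allow, path) rules from every group that names `agent`.

    Simplifications: user agents are matched exactly, and the * and $
    wildcards inside paths are not interpreted.
    """
    rules = []
    in_group = False
    previous_was_agent = False
    for raw in robots_txt.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments
        if ":" not in line:
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field == "user-agent":
            # Consecutive User-agent lines share one group; a new run starts a new group.
            in_group = (in_group and previous_was_agent) or value == agent
            previous_was_agent = True
            continue
        previous_was_agent = False
        # An empty Disallow value matches nothing, so it is skipped.
        if in_group and field in ("allow", "disallow") and value:
            rules.append((field == "allow", value))
    return rules


def is_allowed(rules, path):
    """Longest matching path wins; on a tie, Allow wins. No match means allowed."""
    best_length, allowed = -1, True
    for is_allow, rule_path in rules:
        if path.startswith(rule_path):
            longer = len(rule_path) > best_length
            tie_allow = len(rule_path) == best_length and is_allow
            if longer or tie_allow:
                best_length, allowed = len(rule_path), is_allow
    return allowed


ROBOTS_TXT = """User-agent: *
Disallow: /wp-admin/
Disallow: /wp-includes/
Disallow: /wp-content/plugins/
Disallow: /wp-content/themes/
"""

if __name__ == "__main__":
    rules = parse_rules(ROBOTS_TXT)
    for path in ("/wp-includes/js/jquery/jquery.min.js",
                 "/wp-content/themes/twentytwentyfour/style.css"):
        print(path, "allowed" if is_allowed(rules, path) else "blocked")
```

Run as a script, it reports both sample paths as blocked: the bundled jQuery file and the theme stylesheet are exactly the kind of JS and CSS files the warning is about.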

To resolve the "Googlebot cannot access CSS and JS files" warning:

1. Remove the following line: Disallow: /wp-includes/

Depending on how your robots.txt file is configured, this will fix most of the warnings.

You will most likely see that your site has disallowed access to some WordPress directories like this:

```
User-agent: *
Disallow: /wp-admin/
Disallow: /wp-includes/
Disallow: /wp-content/plugins/
Disallow: /wp-content/themes/
```

2. You can override this in robots.txt by allowing access to the blocked folders:

```
User-agent: *
Allow: /wp-includes/js/
```
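If you manage robots.txt as a plain text file, both fixes are simple text edits. This is a minimal sketch with hypothetical helper names: `remove_rule` applies step 1 and `add_allow_group` applies step 2. According to Google's robots.txt documentation, groups that name the same user agent are combined and the longest matching path wins, so the appended `Allow: /wp-includes/js/` should take precedence over `Disallow: /wp-includes/` for scripts in that folder.

```python
def remove_rule(robots_txt, rule):
    """Step 1: drop every line equal to `rule` (ignoring surrounding spaces)."""
    target = rule.strip()
    kept = [line for line in robots_txt.splitlines() if line.strip() != target]
    return "\n".join(kept) + "\n"


def add_allow_group(robots_txt, paths, agent="*"):
    """Step 2: append a new group that allows `paths` for `agent`."""
    group = [f"User-agent: {agent}"] + [f"Allow: {path}" for path in paths]
    return robots_txt.rstrip("\n") + "\n\n" + "\n".join(group) + "\n"


if __name__ == "__main__":
    current = "User-agent: *\nDisallow: /wp-admin/\nDisallow: /wp-includes/\n"
    print(remove_rule(current, "Disallow: /wp-includes/"))
    print(add_allow_group(current, ["/wp-includes/js/"]))
```

Either result can then be uploaded in place of the existing robots.txt file.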

WordPress error Class WP_Theme not found - Fix it now

This article covers how to resolve the WordPress "Class WP_Theme not found" error for our customers.

This error typically appears during a manual WordPress upgrade. To perform a manual WordPress upgrade:

1. Get the latest WordPress zip (or tar.gz) file.

2. Unpack the zip file that you downloaded.

3. Deactivate plugins.

4. Delete the old wp-includes and wp-admin directories on your web host (through your FTP or shell access).

5. Using FTP or your shell access, upload the new wp-includes and wp-admin directories to your web host, overwriting old files.

6. Upload the individual files from the new wp-content folder to your existing wp-content folder, overwriting existing files. Do NOT delete your existing wp-content folder, and do NOT delete any files or folders in your existing wp-content directory (except for the ones being overwritten by new files).

7. Upload all new loose files from the root directory of the new version to your existing WordPress root directory.

If you skip step 7, you will see this error message when you try to complete your upgrade:

Class WP_Theme not found in theme.php on line 106

To avoid or fix this issue, make sure you perform step 7, then continue with the remaining steps of the manual WordPress update process.
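Steps 4 to 7 of the manual upgrade are plain file operations, so they can be sketched as a short script. `manual_upgrade` is a hypothetical helper that works on local directories (for example, a copy of the site you will sync back over FTP); it assumes you have already backed up the site and deactivated plugins (step 3). Step 7 is the one that replaces `wp-settings.php` and the other root files; skipping it presumably leaves the old loader in place next to the new wp-includes, which is why the WP_Theme class is not found.

```python
import shutil
from pathlib import Path


def manual_upgrade(site_root, new_release):
    """Copy a freshly unpacked WordPress release over an existing install.

    Mirrors steps 4 to 7. Assumes a backup exists and plugins are deactivated.
    """
    site, new = Path(site_root), Path(new_release)

    # Steps 4-5: delete the old wp-admin and wp-includes, then upload the new ones.
    for name in ("wp-admin", "wp-includes"):
        shutil.rmtree(site / name, ignore_errors=True)
        shutil.copytree(new / name, site / name)

    # Step 6: merge wp-content, overwriting matching files but deleting nothing.
    shutil.copytree(new / "wp-content", site / "wp-content", dirs_exist_ok=True)

    # Step 7: copy every loose file in the release root (wp-settings.php and others).
    for item in new.iterdir():
        if item.is_file():
            shutil.copy2(item, site / item.name)
```

The release archive ships `wp-config-sample.php` rather than `wp-config.php`, so step 7 leaves your existing configuration untouched.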

WordPress 405 Method Not Allowed Error - Fix it now

This article covers different methods to troubleshoot the 405 Method Not Allowed error in WordPress.

The 405 Method Not Allowed WordPress error occurs when the web server is configured in a way that does not allow you to perform a specific action for a particular URL.

It's an HTTP response status code that indicates that the request method is known by the server but is not supported by the target resource.
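To see the status code in action, here is a minimal WSGI application using only Python's standard library. It is an illustration, not WordPress code: the hypothetical `ALLOWED_METHODS` policy accepts GET and HEAD on every path and rejects anything else with 405 plus an `Allow` header, which RFC 9110 requires on a 405 response.

```python
ALLOWED_METHODS = ("GET", "HEAD")  # hypothetical policy for this example


def app(environ, start_response):
    """Serve GET/HEAD; answer any other method with 405 and an Allow header."""
    method = environ["REQUEST_METHOD"]
    if method not in ALLOWED_METHODS:
        start_response("405 Method Not Allowed", [
            ("Allow", ", ".join(ALLOWED_METHODS)),
            ("Content-Type", "text/plain"),
        ])
        return [b"405 Method Not Allowed\n"]
    start_response("200 OK", [("Content-Type", "text/plain")])
    # HEAD responses carry headers only, so the body is empty.
    return [b"" if method == "HEAD" else b"Hello\n"]
```

To try it locally, run `wsgiref.simple_server.make_server("127.0.0.1", 8000, app).serve_forever()` and send `curl -i -X POST http://127.0.0.1:8000/`; the server answers 405 and lists the methods it does support.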

To fix 405 Method Not Allowed errors:

1. Comb through your website's code to find bugs.

If there's a mistake in your website's code, your web server might not be able to answer requests correctly, for example requests from a content delivery network. Comb through your code to find bugs, or copy your code onto a development machine and debug it there.

2. Sift through your server-side logs.

There are two types of server-side logs: application logs and server logs. Application logs record your website's history, such as the web pages requested by visitors and the servers it connected to.
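When sifting through logs, a quick first step is to count which requests actually received a 405. This sketch assumes the common "combined" access-log format that Apache and Nginx typically use; the regular expression and function name are illustrative, and lines in other formats are simply skipped.

```python
import re
from collections import Counter

# Combined log format: ip ident user [time] "METHOD path PROTOCOL" status size ...
LOG_LINE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) '
)


def count_405s(lines):
    """Count (method, path) pairs that received a 405 response."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and match["status"] == "405":
            hits[(match["method"], match["path"])] += 1
    return hits
```

The most frequent pairs tell you which URL and HTTP method to look for in your code and configuration.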

3. Check your server configuration files.

The last way to find out what's causing your 405 Method Not Allowed error is to look at your web server's configuration files (for example, the .htaccess file on Apache servers) for rules that restrict HTTP methods.
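One way to review configuration files is to search them for directives that restrict HTTP methods, such as Apache's `<Limit>` and `<LimitExcept>` sections or Nginx's `limit_except`. This is a rough, regex-based sketch with a hypothetical function name; it can miss rules that block methods in other ways, such as mod_rewrite conditions on `%{REQUEST_METHOD}` or security plugins.

```python
import re

# Apache <Limit>/<LimitExcept> sections and the nginx limit_except directive.
METHOD_RULE = re.compile(r"<\s*Limit(Except)?\b|\blimit_except\b", re.IGNORECASE)


def method_restrictions(config_text):
    """Return (line number, line) pairs that look like HTTP-method restrictions."""
    return [
        (number, line.strip())
        for number, line in enumerate(config_text.splitlines(), start=1)
        if METHOD_RULE.search(line) and not line.lstrip().startswith("#")
    ]
```

Any line it reports is a candidate for the rule that rejects the method your request used.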

The WordPress Missed Schedule Post Error - Methods to fix it

This article covers how to fix the missed schedule post error in WordPress. If your scheduled posts are not publishing, you can resolve it with a plugin that triggers the WordPress cron jobs responsible for publishing them.

Cron is a technical term for commands that run at a scheduled time, like your scheduled posts in WordPress.

Technically, a real cron job runs at the server level. But because WordPress doesn't have access to that level, it runs a simulated cron instead.

These simulated cron jobs, such as publishing scheduled posts, are triggered only when a person or bot visits your site.

Because it is not a real cron job, a post can miss its scheduled time when no visit triggers the cron, which causes a missed schedule error.
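The dependency on visitors can be shown with a toy model. This is a simplification written for illustration, not WordPress's actual WP-Cron code: it assumes every visit triggers the cron run and that the post is published on the first visit at or after its scheduled time.

```python
from datetime import datetime


def simulated_publish_time(scheduled_at, visits, deadline):
    """When a visit-triggered (simulated) cron would publish a post.

    Returns the first visit at or after scheduled_at, or None if no visit
    arrives before deadline, which models the "missed schedule" case.
    """
    for visit in sorted(visits):
        if scheduled_at <= visit <= deadline:
            return visit
    return None


if __name__ == "__main__":
    scheduled = datetime(2024, 5, 1, 9, 0)
    visits = [datetime(2024, 5, 1, 8, 55), datetime(2024, 5, 1, 12, 0)]
    print(simulated_publish_time(scheduled, visits, datetime(2024, 5, 2, 9, 0)))
```

With a visit just before 9:00 and the next one at noon, the post goes live three hours late; with no later visit at all, it is never published.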

To fix the missed schedule error in WordPress:

Every fifteen minutes, the post scheduler plugin checks for posts that have the missed schedule error and automatically publishes them for you.

It uses multiple techniques to check your site for missed posts, making sure no scheduled post is missed.
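The periodic check can be sketched as follows. This is a hypothetical model, not the plugin's code (a real plugin works in PHP through WordPress functions such as `wp_publish_post`); it only reuses WordPress's post statuses, where "future" means scheduled and "publish" means live.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Post:
    title: str
    status: str  # WordPress post status: "future" (scheduled) or "publish" (live)
    scheduled_at: datetime


def publish_missed(posts, now):
    """Publish every scheduled post whose time has already passed; return their titles."""
    published = []
    for post in posts:
        if post.status == "future" and post.scheduled_at <= now:
            post.status = "publish"
            published.append(post.title)
    return published
```

Calling `publish_missed` every fifteen minutes means a missed post goes live at most about fifteen minutes after the check notices it.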

Methods to fix the WordPress missed schedule error:

1. Use the Scheduled Post Trigger plugin.

2. Manage cron jobs directly through your server.
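For method 2, WordPress lets you turn off the visit-triggered cron by adding `define( 'DISABLE_WP_CRON', true );` to wp-config.php; a real server cron job then requests wp-cron.php on a fixed schedule. The script below is a minimal sketch of such a request; the file name `trigger_wp_cron.py` and the function names are hypothetical.

```python
import sys
import urllib.request
from urllib.parse import urljoin


def wp_cron_url(site_url):
    """Build the URL of the site's wp-cron.php endpoint."""
    return urljoin(site_url.rstrip("/") + "/", "wp-cron.php?doing_wp_cron")


def trigger_wp_cron(site_url, timeout=30):
    """Request wp-cron.php once so WordPress runs any due events, such as scheduled posts."""
    with urllib.request.urlopen(wp_cron_url(site_url), timeout=timeout) as response:
        return response.status


if __name__ == "__main__" and len(sys.argv) > 1:
    # Example: python trigger_wp_cron.py https://example.com
    print(trigger_wp_cron(sys.argv[1]))
```

A crontab entry such as `*/15 * * * * python3 /path/to/trigger_wp_cron.py https://example.com` (the path is a placeholder) would then run it every fifteen minutes, regardless of traffic.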

WordPress 401 error - Fix it now

This article covers easy-to-follow methods to resolve the WordPress 401 error.

The 401 error goes by multiple names, including Error 401 and 401 Unauthorized error.

These errors are sometimes accompanied by a message ‘Access is denied due to invalid credentials’ or ‘Authorization required’.

To fix the 401 error in WordPress:

1. Temporarily Remove Password Protection on WordPress Admin

2. Clear Firewall Cache to Solve 401 Error in WordPress

3. Deactivate All WordPress Plugins

4. Switch to a Default WordPress Theme

5. Reset WordPress Password
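Before working through these steps, it can help to check how the server answers. This sketch requests a URL and looks at the `WWW-Authenticate` header; the mapping in `suggest_fix` is a heuristic assumption, not a rule: server-level password protection usually answers with a Basic challenge, while a 401 without one more often comes from a firewall, plugin, or theme.

```python
import urllib.error
import urllib.request


def probe(url, timeout=10):
    """Return (status, WWW-Authenticate header or None) for a GET request to url."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status, response.headers.get("WWW-Authenticate")
    except urllib.error.HTTPError as err:  # urlopen raises on 401 and other errors
        return err.code, err.headers.get("WWW-Authenticate")


def suggest_fix(status, www_authenticate):
    """Heuristic only: map a probe result to the steps listed above."""
    if status != 401:
        return "No 401 returned for this URL."
    if www_authenticate and www_authenticate.lower().startswith("basic"):
        return "Server-level password protection (HTTP Basic auth): see step 1."
    return "401 without a Basic challenge: check firewall, plugins, and theme (steps 2-4)."
```

For example, `print(suggest_fix(*probe("https://example.com/wp-admin/")))` prints a hint for your own admin URL.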